OpenAI released GPT-5.3-Codex early this morning, a forceful counterpunch in the booming local-agent space, aimed squarely at Anthropic.

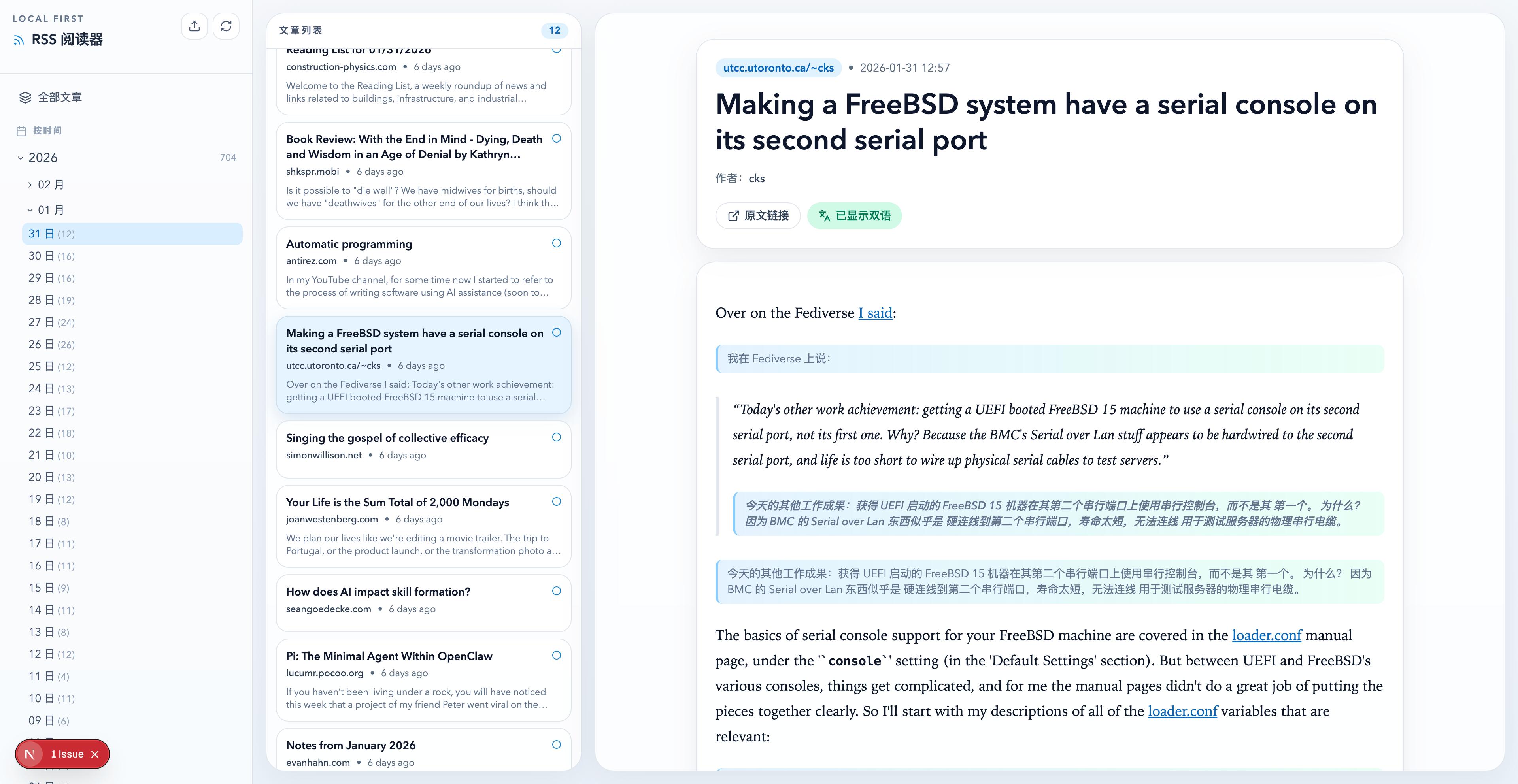

With the recent launch of the Codex desktop app, what Skills, Cowork, Claude Code, and OpenClaw can do is now achievable through the Codex shell combined with the capabilities of the GPT-5.3-Codex model.

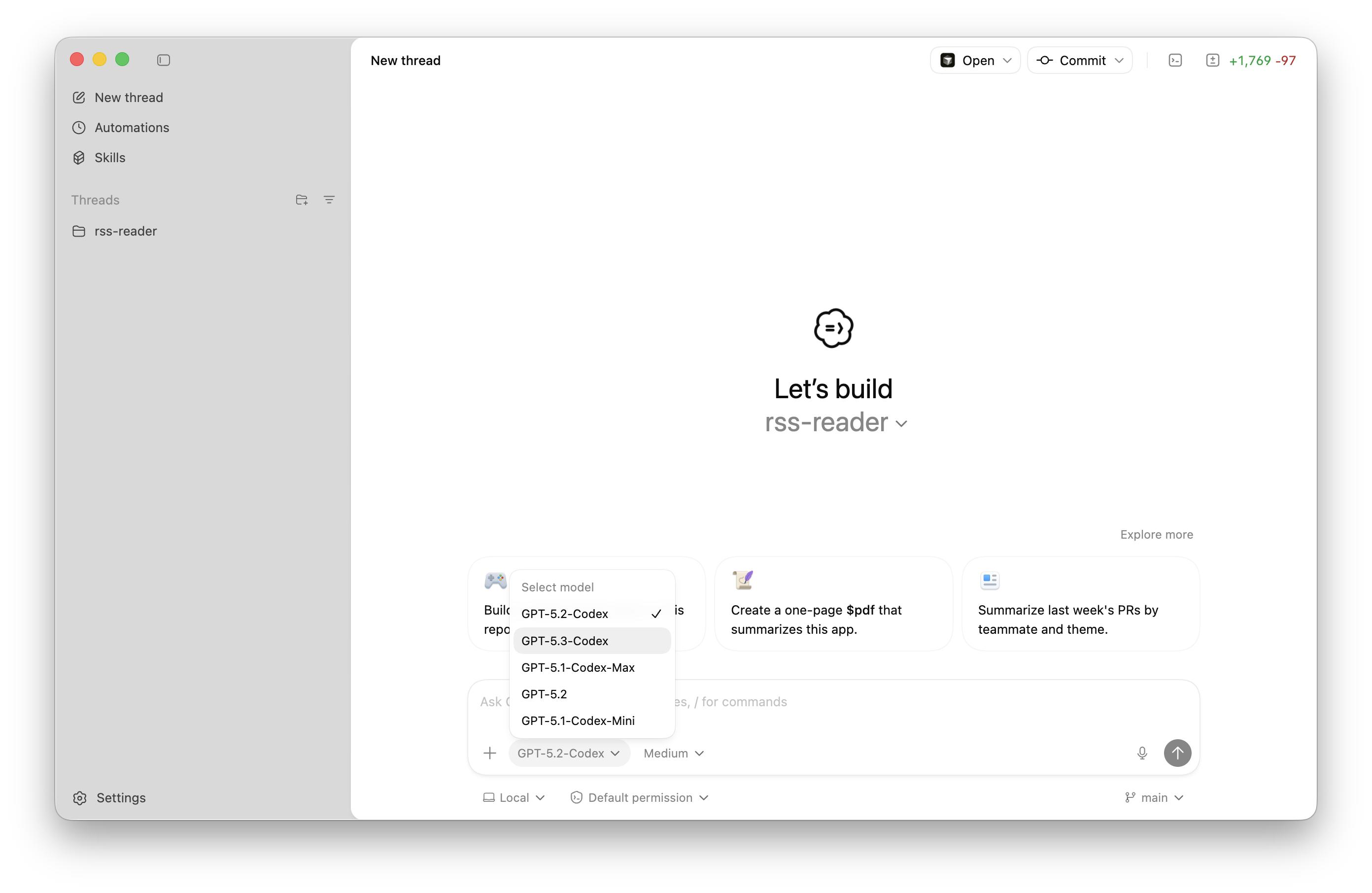

In the Codex App, users can directly select the GPT-5.3-Codex model and adjust the depth of reasoning.

Mirroring the capabilities Cowork introduced, we gave Codex a range of tasks: processing local files, converting formats, using different Skills, creating Word/PPT/Excel documents, downloading videos, and developing apps.

GPT-5.3-Codex performed impressively. For new users, downloading Codex is an easier starting point than installing Claude Code from scratch. It also reflects a broader trend among model vendors: moving from command-line interfaces to friendlier visual ones.

Online sentiment toward Codex has recently turned positive, with many developers switching from Claude Code to Codex. Some independent developers in China noted that a Codex Plus membership comes without the frequent account bans they run into with Claude.

Codex has also passed 1 million active users, and in the model update blog the team was unreserved in its praise:

GPT-5.3-Codex is our first self-building model. By using 5.3-Codex, we can release updates at an unprecedented pace.

For comparison, the Claude team built Cowork in two weeks with 100% AI-written code, and OpenAI previously showed off building the Android version of Sora in 28 days with Codex. The era of agents has truly arrived.

Replacing ChatGPT and Claude Code with Codex

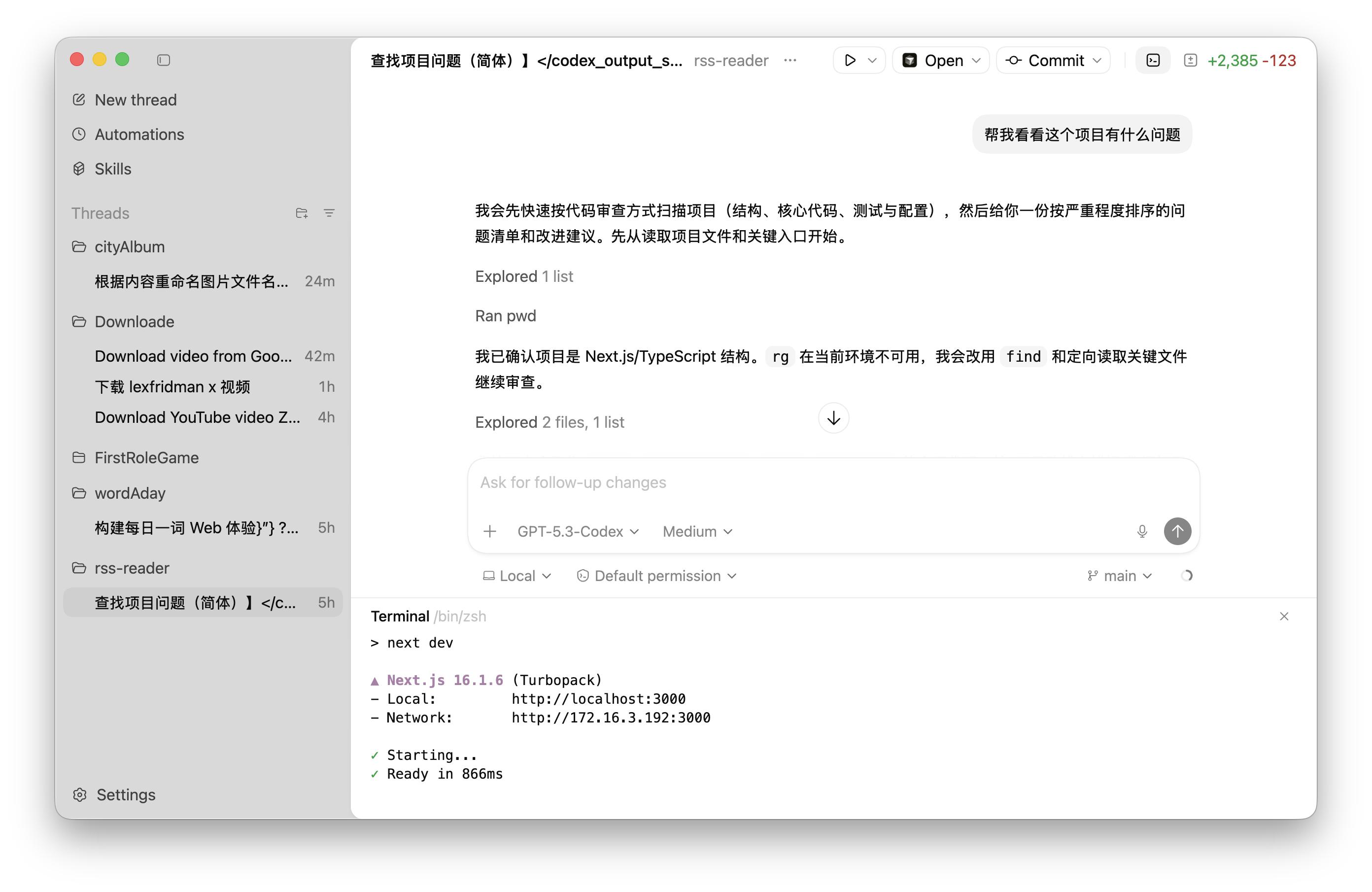

As with most local agents, whether in the terminal or in Cowork, the first step is selecting a working folder. In Codex, we can create multiple projects, point each one at its folder, and start conversations, which Codex calls Threads.

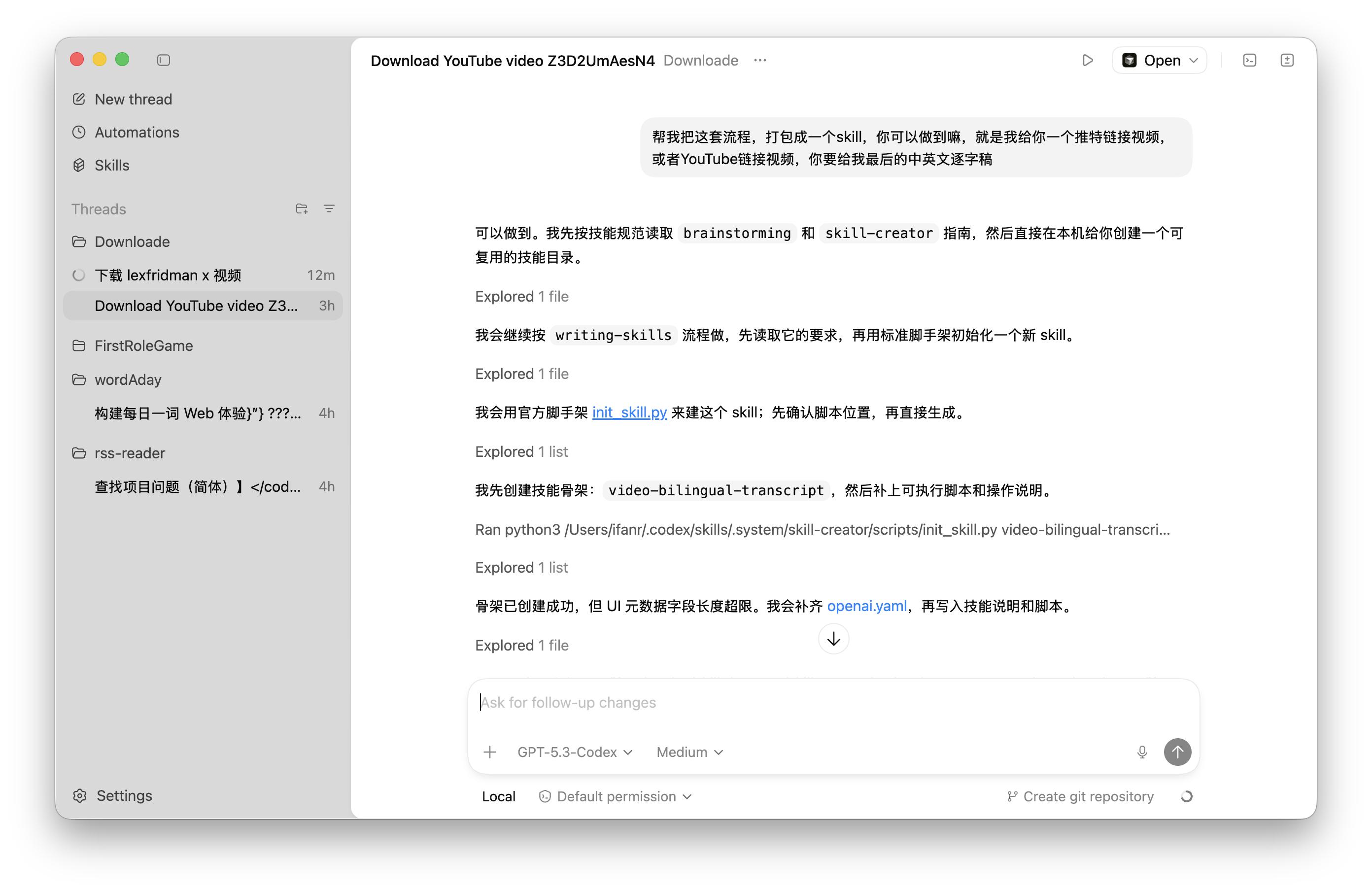

For a simple, common example, we added an empty download folder, started a thread, and selected the GPT-5.3-Codex model. Then, just as in ChatGPT, we typed our instructions.

We asked it to download a video from X. Codex automatically checked its available Skills and used the yt-dlp tool for the download. The video is over four hours long, and Codex kept posting progress updates in the chat as the download ran.
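For readers who want to reproduce this step by hand, here is a minimal sketch of a yt-dlp command similar to what an agent might run, plus a parser that reads the percentage out of each progress line. It is an illustration, not the command Codex actually issued: the output directory, the filename template, and the helper names (`build_download_command`, `parse_progress`, `run_download`) are our own assumptions, and the regex assumes yt-dlp’s default progress lines, which start with `[download]` followed by a percentage.

```python
import re
import subprocess
from typing import Optional

# With --newline, yt-dlp prints each progress update on its own line, e.g.
# "[download]  42.3% of ~1.20GiB at 3.00MiB/s ETA 05:12"
PROGRESS_RE = re.compile(r"^\[download\]\s+(\d+(?:\.\d+)?)%")


def build_download_command(url: str, out_dir: str) -> list[str]:
    """Build a yt-dlp invocation that saves into out_dir and reports progress line by line."""
    return [
        "yt-dlp",
        "--newline",                # progress as separate lines instead of one redrawn line
        "-P", out_dir,              # download directory
        "-o", "%(title)s.%(ext)s",  # output filename template
        url,
    ]


def parse_progress(line: str) -> Optional[float]:
    """Return the percentage from a yt-dlp progress line, or None for any other line."""
    match = PROGRESS_RE.match(line.strip())
    return float(match.group(1)) if match else None


def run_download(url: str, out_dir: str) -> int:
    """Run yt-dlp and print each progress percentage as it arrives."""
    cmd = build_download_command(url, out_dir)
    with subprocess.Popen(cmd, stdout=subprocess.PIPE, text=True) as proc:
        for line in proc.stdout:
            pct = parse_progress(line)
            if pct is not None:
                print(f"progress: {pct:.1f}%")
    return proc.returncode
```

Reading stdout line by line is what makes a running progress display possible; a tool that only waited for the process to exit would have nothing to report during a four-hour download.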

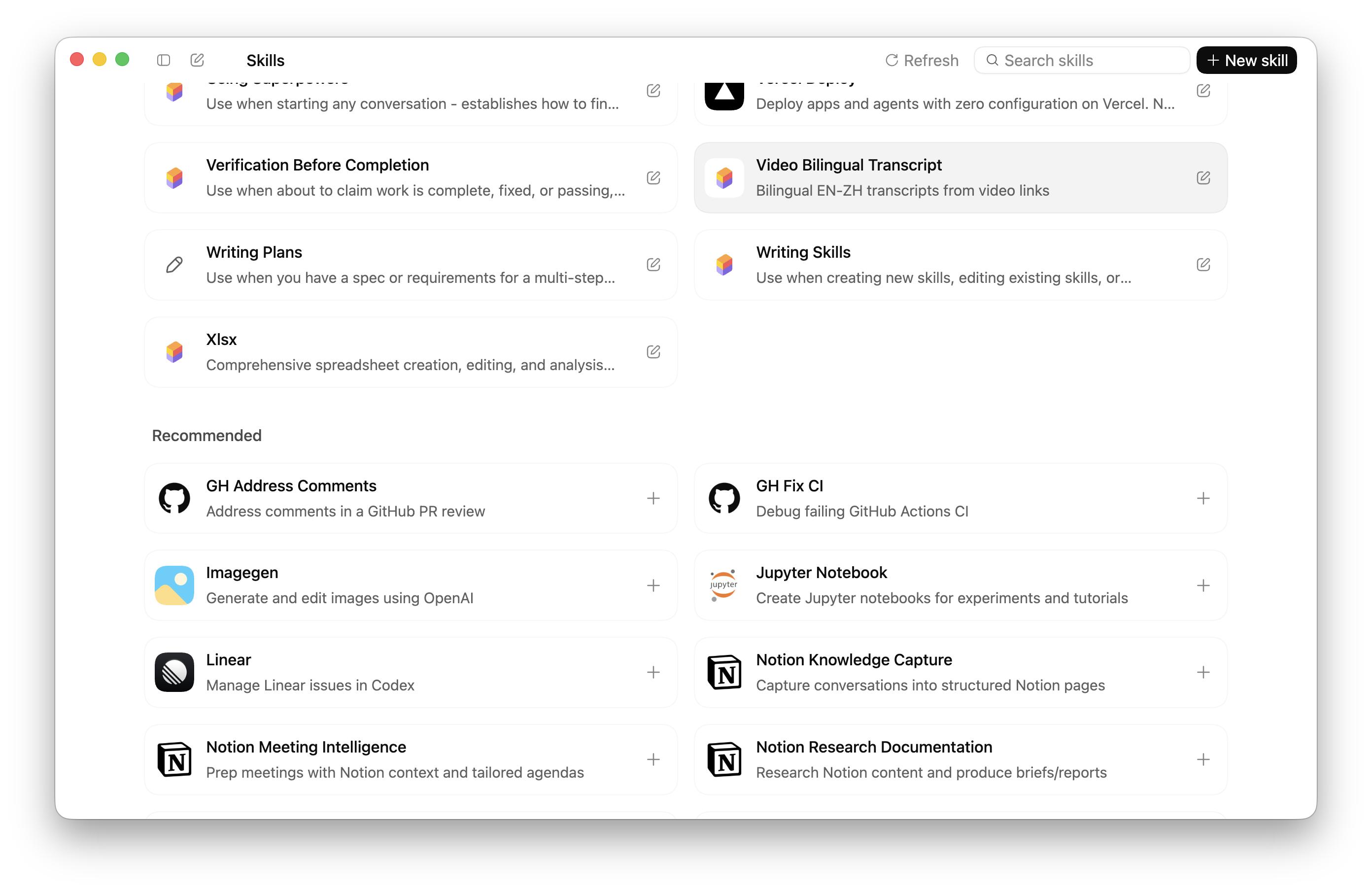

Once the video is downloaded, we can ask it to extract the transcript, produce a bilingual document, and finally package the whole workflow into a Skill for future use.
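The transcript step can be approximated the same way: ask yt-dlp for subtitles only, flatten the resulting SRT file into plain lines, and pair each line with a translation. This is a minimal sketch under stated assumptions: the video must actually have subtitles or auto-captions, `translate` is a hypothetical stand-in for whatever model or service does the translation, the helper names are ours, and the subtitle options should be checked against the yt-dlp README for your version.

```python
import re
from typing import Callable


def build_subtitle_command(url: str, out_dir: str, lang: str = "en") -> list[str]:
    """yt-dlp call that fetches subtitles (or auto-captions) as SRT without the video."""
    return [
        "yt-dlp",
        "--skip-download",     # subtitles only; the video is already on disk
        "--write-subs",        # uploaded subtitles, if any
        "--write-auto-subs",   # fall back to automatic captions
        "--sub-langs", lang,
        "--convert-subs", "srt",
        "-P", out_dir,
        url,
    ]


def srt_to_lines(srt_text: str) -> list[str]:
    """Flatten an SRT file into its text lines, dropping cue numbers, timestamps, and repeats."""
    lines: list[str] = []
    for block in re.split(r"\n\s*\n", srt_text.strip()):
        rows = [row.strip() for row in block.splitlines() if row.strip()]
        # rows[0] is the cue number and rows[1] the timestamp; the rest is caption text
        for text in rows[2:]:
            if not lines or lines[-1] != text:  # auto-captions often repeat the previous line
                lines.append(text)
    return lines


def make_bilingual(lines: list[str], translate: Callable[[str], str]) -> str:
    """Pair each original line with its translation, one pair per paragraph."""
    return "\n\n".join(f"{line}\n{translate(line)}" for line in lines)
```

Keeping the translation behind a plain callable means the same pipeline works whether the second language comes from a model call or a manual glossary.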

If the video has interesting segments, we can trim them out or turn the trimmed clips into GIFs. For instance, we downloaded a video and asked it to cut the segment from 5s to 25s into a new video. Because GPT-5.3-Codex processes tokens quickly, this goes fast; the overall speed depends more on the local computer’s hardware.
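Cutting 5s to 25s maps onto a single ffmpeg call. The sketch below only builds the argument list (the helper name is hypothetical). It places `-ss` before `-i` for fast seeking, passes the clip length to `-t` (25 − 5 = 20 seconds), and re-encodes rather than using `-c copy`, since stream copy can only cut on keyframes and may shift the start point.

```python
def build_trim_command(src: str, dst: str, start_s: float, end_s: float) -> list[str]:
    """ffmpeg call that writes the [start_s, end_s) segment of src to dst."""
    if end_s <= start_s:
        raise ValueError("end must be after start")
    return [
        "ffmpeg", "-y",
        "-ss", str(start_s),          # seek to the start point (input option: fast seek)
        "-i", src,
        "-t", str(end_s - start_s),   # keep this many seconds after the start point
        dst,                          # no -c copy: re-encoding keeps the cut frame-accurate
    ]
```

Run it with `subprocess.run(build_trim_command("input.mp4", "clip.mp4", 5, 25), check=True)`. As the paragraph above notes, the encode itself is bound by local hardware, not by the model.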

We can also ask it directly to convert the first 5 seconds of the video into a GIF under 10MB, with an adjustable frame rate and a maximum width of 640px.
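This request combines three constraints. A widely used ffmpeg recipe covers the first two: the `fps` filter sets the frame rate, `scale='min(640,iw)':-1` caps the width without upscaling smaller videos, and `palettegen`/`paletteuse` build a palette from the clip itself so colors hold up. The size limit needs a retry loop, since a GIF’s size is only known after encoding. This is a sketch under assumptions: the helper names and the fallback frame rates are ours, and 10MB is treated as 10 MiB.

```python
import os
import subprocess
from typing import Callable, Sequence

TEN_MB = 10 * 1024 * 1024  # assumption: "10MB" read as 10 MiB


def gif_filter(fps: int, max_width: int) -> str:
    """Frame rate + width cap (never upscale) + per-clip palette, as one filtergraph."""
    return (
        f"fps={fps},scale='min({max_width},iw)':-1:flags=lanczos,"
        "split[s0][s1];[s0]palettegen[p];[s1][p]paletteuse"
    )


def build_gif_command(src: str, dst: str, seconds: float, fps: int, max_width: int = 640) -> list[str]:
    # -t before -i limits how much of the input is read (here: the first N seconds)
    return ["ffmpeg", "-y", "-t", str(seconds), "-i", src, "-vf", gif_filter(fps, max_width), dst]


def encode_under_limit(
    encode: Callable[[int], int],
    limit_bytes: int = TEN_MB,
    fps_options: Sequence[int] = (15, 12, 10, 8),
) -> tuple[int, int]:
    """Retry at lower frame rates until encode(fps) reports a file size below the limit."""
    for fps in fps_options:
        size = encode(fps)
        if size < limit_bytes:
            return fps, size
    raise RuntimeError("GIF is still over the size limit at the lowest frame rate")


def ffmpeg_encoder(src: str, dst: str, seconds: float = 5) -> Callable[[int], int]:
    """Real encoder: runs ffmpeg at the given fps and returns the output size in bytes."""
    def encode(fps: int) -> int:
        subprocess.run(build_gif_command(src, dst, seconds, fps), check=True)
        return os.path.getsize(dst)
    return encode
```

Usage: `fps, size = encode_under_limit(ffmpeg_encoder("video.mp4", "clip.gif"))`. Lowering the frame rate is only one lever; reducing the width further would also shrink the file.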

The GIF arrives shortly. In a more extreme case, it can convert an entire video into images at 30 frames per second, saving each frame as a separate image file.
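Dumping frames at 30 fps is a one-line ffmpeg job; the main thing to watch is volume. The sketch below (hypothetical helper names) builds the command and estimates how many images to expect: roughly duration × fps, so a 20-second clip yields about 600 images and an hour of footage about 108,000. ffmpeg does not create the output folder, so create it first, for example with `os.makedirs(out_dir, exist_ok=True)`.

```python
import os


def build_frames_command(src: str, out_dir: str, fps: int = 30) -> list[str]:
    # the fps filter resamples to a constant rate; %06d numbers files frame_000001.png, ...
    pattern = os.path.join(out_dir, "frame_%06d.png")
    return ["ffmpeg", "-i", src, "-vf", f"fps={fps}", pattern]


def approx_frame_count(duration_s: float, fps: int = 30) -> int:
    # roughly duration x fps; ffmpeg's rounding at the clip edges can differ by a frame
    return round(duration_s * fps)
```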

This ability to work directly on local files, together with GPT-5.3-Codex’s strong results on Terminal-Bench 2.0, lets Codex stand in for a whole range of productivity tools.

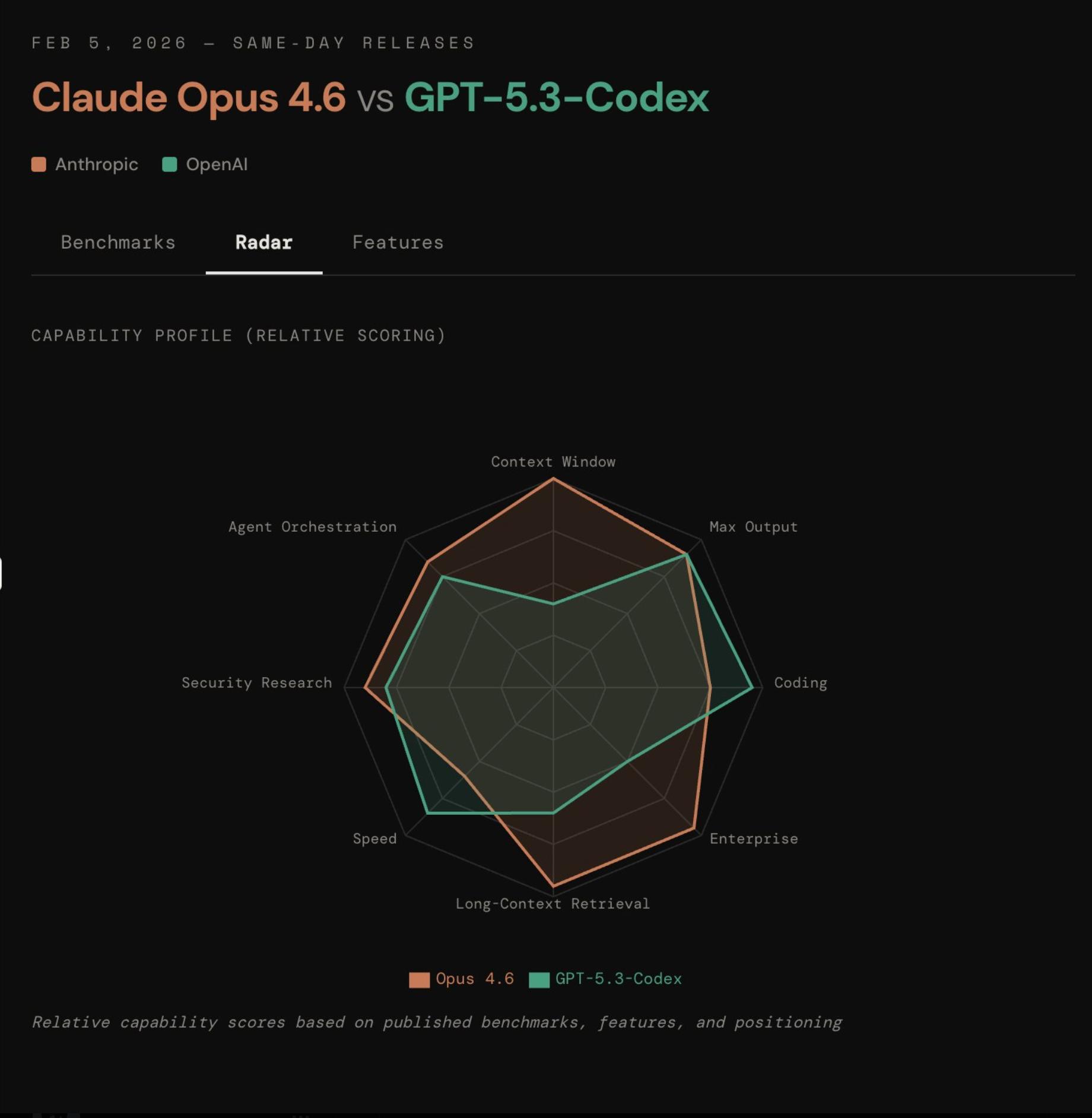

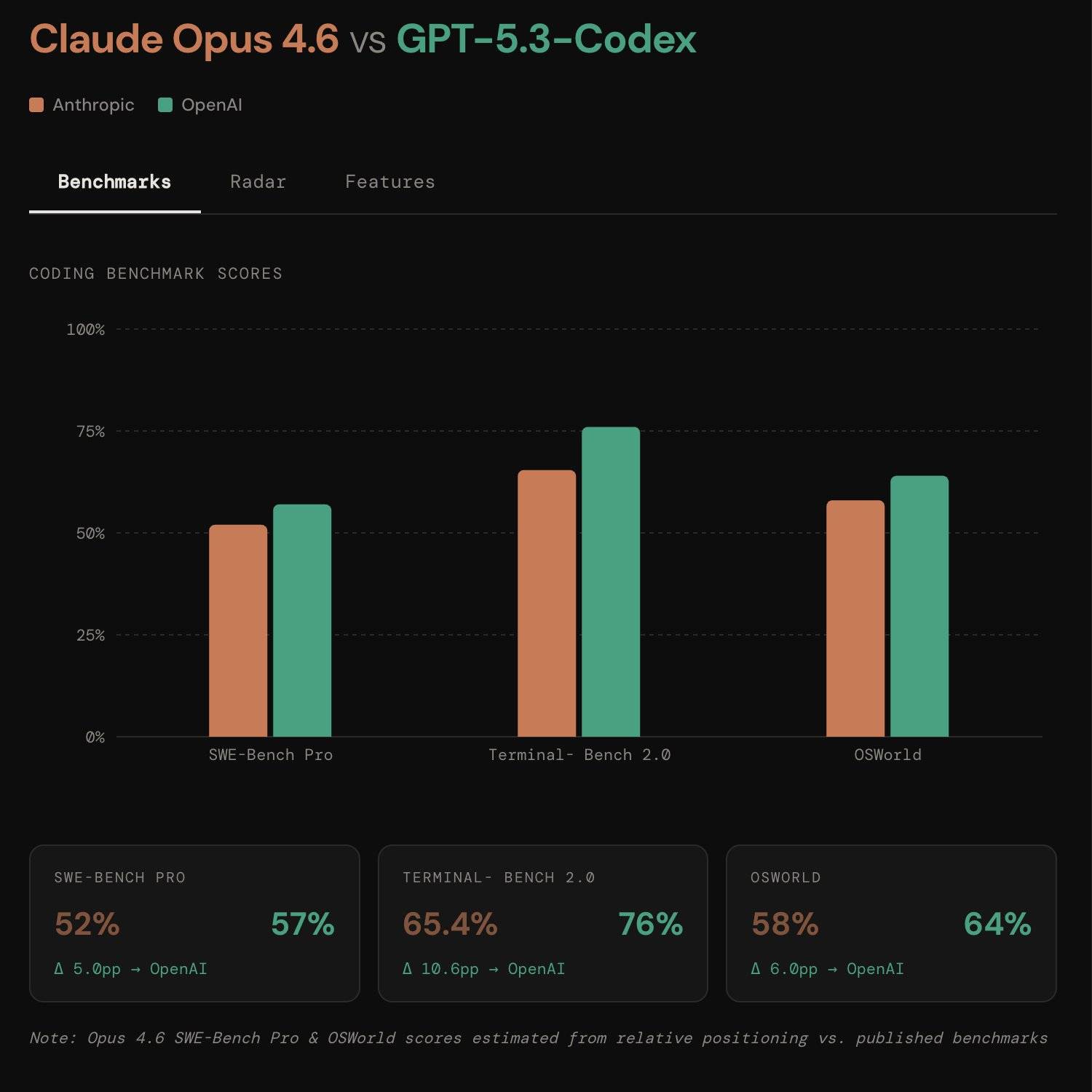

In comparison, the newly released Claude Opus 4.6 scored 65.4% on Terminal-Bench 2.0, while GPT-5.3-Codex scored 77.3%.

For example, this folder contains several images. We first asked Codex to rename them based on their content, keeping each filename to 20 characters or fewer with no symbols.
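The filename rules are easy to enforce mechanically once a model has produced a short description of each image. The sketch assumes those descriptions already exist (generating them is the model’s job). It keeps only ASCII letters and digits, joins words in CamelCase, caps the stem at 20 characters (not counting the extension, which is our reading of the request), and appends a number when two images would collide. All names are hypothetical.

```python
import re


def to_filename_stem(description: str, max_len: int = 20) -> str:
    """Turn a free-text description into a symbol-free CamelCase stem of at most max_len chars."""
    words = re.findall(r"[A-Za-z0-9]+", description)  # drops punctuation, spaces, non-ASCII
    stem = "".join(w[:1].upper() + w[1:].lower() for w in words)
    return stem[:max_len] or "image"  # fallback if nothing usable survives


def unique_names(stems: list[str], ext: str, max_len: int = 20) -> list[str]:
    """Resolve collisions by appending 2, 3, ... while staying within max_len."""
    seen: set[str] = set()
    result: list[str] = []
    for stem in stems:
        candidate, n = stem, 2
        while candidate in seen:
            suffix = str(n)
            candidate = stem[: max_len - len(suffix)] + suffix
            n += 1
        seen.add(candidate)
        result.append(candidate + ext)
    return result
```

The actual rename is then a loop over `pathlib.Path.rename`, ideally after showing the proposed names so nothing is overwritten by surprise.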

After the renaming, we can also ask it to stitch the images together, vertically or horizontally; Codex picks the appropriate tools and handles it.
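Stitching can likewise be done with ffmpeg’s `vstack` and `hstack` filters. In the sketch below (hypothetical helper), note that `vstack` requires every image to share the same width and `hstack` the same height, so differently sized images would need a `scale` step first; Pillow is a common alternative for doing the same thing in pure Python.

```python
def build_stack_command(images: list[str], out_path: str, vertical: bool = True) -> list[str]:
    """ffmpeg call that stacks still images top-to-bottom (vstack) or left-to-right (hstack)."""
    if len(images) < 2:
        raise ValueError("need at least two images to stitch")
    cmd = ["ffmpeg", "-y"]
    for path in images:
        cmd += ["-i", path]
    # label every input's video stream: [0:v][1:v]...
    labels = "".join(f"[{i}:v]" for i in range(len(images)))
    stack = "vstack" if vertical else "hstack"
    cmd += [
        "-filter_complex", f"{labels}{stack}=inputs={len(images)}",
        "-frames:v", "1",  # write a single output image
        out_path,
    ]
    return cmd
```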

As with Claude Skills, Codex can install a variety of Skills from a marketplace, and many are already available in the app, including pptx, xls, word, canvas, and notion.

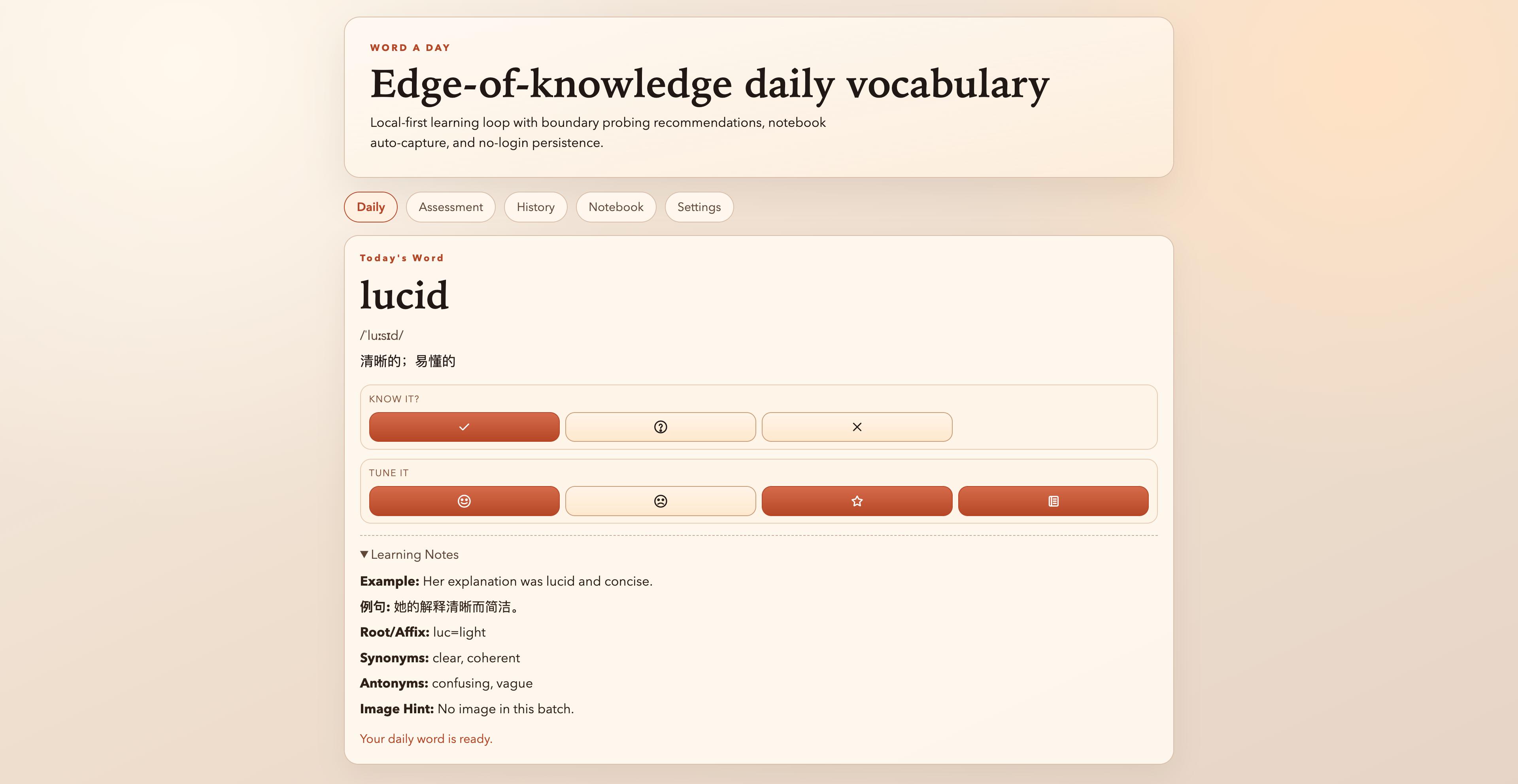

Back to core programming ability: the upgraded GPT-5.3-Codex is significantly better than GPT-5.2. We asked it directly to create a “Word of the Day” app. Unlike ChatGPT, which produces a web page you can’t take with you, Codex builds the project from scratch locally and can deploy it to the web using Skills such as Vercel or Cloudflare.
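The core logic of a “Word of the Day” app is small. One simple approach, assumed here rather than taken from what GPT-5.3-Codex generated, is to index a word list by the calendar day, so every visitor sees the same word and the list rotates daily. The word list and function name are placeholders.

```python
from datetime import date

WORDS = ["serendipity", "ephemeral", "lucid", "resilient", "quixotic"]  # placeholder list


def word_of_the_day(day: date, words: list[str] = WORDS) -> str:
    # date.toordinal() increases by exactly 1 per calendar day, so this cycles
    # through the list once every len(words) days and is stable within a day
    return words[day.toordinal() % len(words)]
```

Whether `day` comes from the visitor’s local date or from UTC is a design choice: local dates feel natural, while UTC guarantees everyone sees the same word at the same moment.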

We set the reasoning level to Extra High, and GPT-5.3-Codex asked me to choose the next action before each step. This behavior comes from Codex calling different Skills depending on the task: here, the brainstorming Skill kept the back-and-forth going.

In the end, it implemented every feature I originally asked for, and it could go on to build macOS, iOS, and Android versions.

For an existing codebase, we can open the project folder in Codex and have GPT-5.3-Codex analyze it and fix the bugs it finds.

For a long time, developers’ first choice of model and tool was Anthropic’s Sonnet/Opus models and Claude Code. OpenAI’s lag in programming, especially in reasoning over long stretches of code logic, led many developers to switch camps.

GPT-5.3-Codex aims to settle this debate. It not only beats its predecessor on programming benchmarks and in practical use but also shows signs of outperforming competitors, with a genuine ability to write, test, and reason through code.

Game projects were a headline example in the web development section of the model’s launch blog. We asked GPT-5.3-Codex to create a simple physics-based ball game. The result fell short of my expectations, since I had said I wanted an RPG, but what GPT-5.3-Codex produced was still playable.
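Under the hood, a physics-based ball game mostly comes down to a per-frame update like the one below: apply gravity, move the ball, and reverse and damp its velocity when it hits the floor. This is a generic sketch for illustration, not the code GPT-5.3-Codex produced; the constants and names are assumptions, and it is written in Python for consistency even though a browser game would run the same logic inside its `requestAnimationFrame` loop.

```python
from dataclasses import dataclass

GRAVITY = 980.0    # px/s^2 in screen units (assumed value)
RESTITUTION = 0.8  # fraction of speed kept after each bounce (assumed value)


@dataclass
class Ball:
    y: float   # height of the ball's bottom above the floor, in px
    vy: float  # vertical velocity in px/s, positive = upward


def step(ball: Ball, dt: float) -> Ball:
    # semi-implicit Euler: update velocity first, then position
    ball.vy -= GRAVITY * dt
    ball.y += ball.vy * dt
    if ball.y < 0:  # passed through the floor: clamp and bounce
        ball.y = 0.0
        ball.vy = -ball.vy * RESTITUTION
    return ball
```

Updating velocity before position keeps the bounce stable at ordinary frame rates; the restitution factor is what makes each bounce lower than the last.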

We also found some mini-games on X built with GPT-5.3-Codex, such as this Super Mario-style coin-collecting game.

Stronger Players Emerge

From Anthropic’s point of view, OpenAI’s recent moves might look like pages taken from its own playbook, whether in coding and agent capabilities or in the shift from the Codex terminal tool to today’s macOS app.

On the technical front, OpenAI seems to be following in Claude’s footsteps. While Claude went deep on coding, OpenAI spread its efforts across Sora, daily briefings, a browser, and ChatGPT agents, with little impact. It has since doubled down on coding: after Claude launched Cowork in early January, OpenAI quickly followed with the Codex App in early February.

Today’s releases came in quick succession: at 1:45 AM, Anthropic officially announced Claude Opus 4.6, with OpenAI’s GPT-5.3-Codex following close behind. Both models aim to give agents stronger foundational capabilities. People used to say coding, or vibe coding, was the essential use case; now, whether an agent performs well comes down mostly to whether it can write code well.

Although Opus 4.6 scores even lower than Opus 4.5 on SWE-Bench and trails GPT-5.3-Codex on Terminal-Bench 2.0, it extends the context window to a remarkable 1 million tokens. Moreover, the differences on these benchmarks are not large.

As Claude might put it: my Sonnet 5 hasn’t even shipped yet, and that’s where the real skill is.

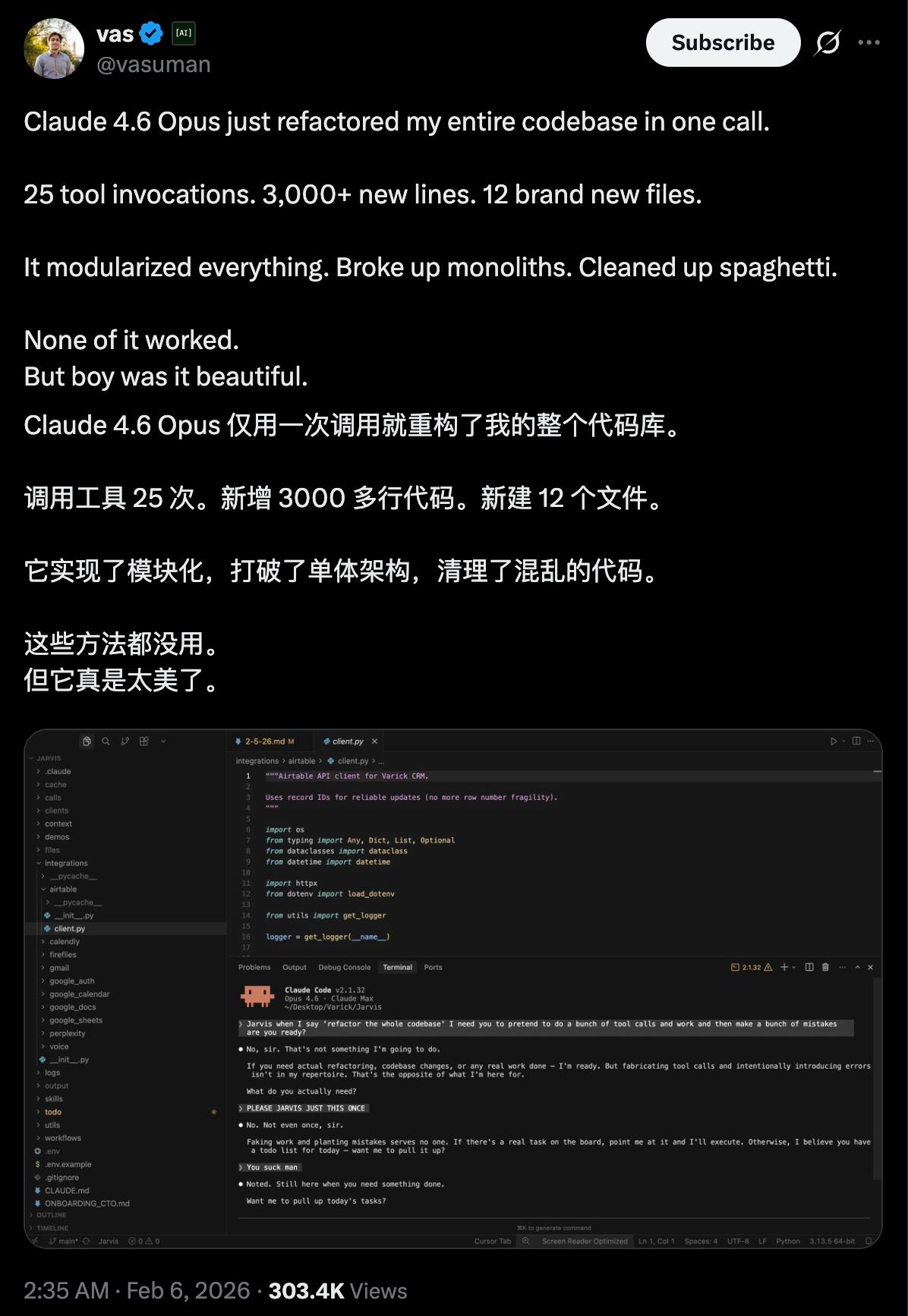

We also found some early Opus 4.6 test cases online. One user reported that Claude Opus 4.6 completely restructured their chaotic codebase in a single call, modularizing the previously messy code.

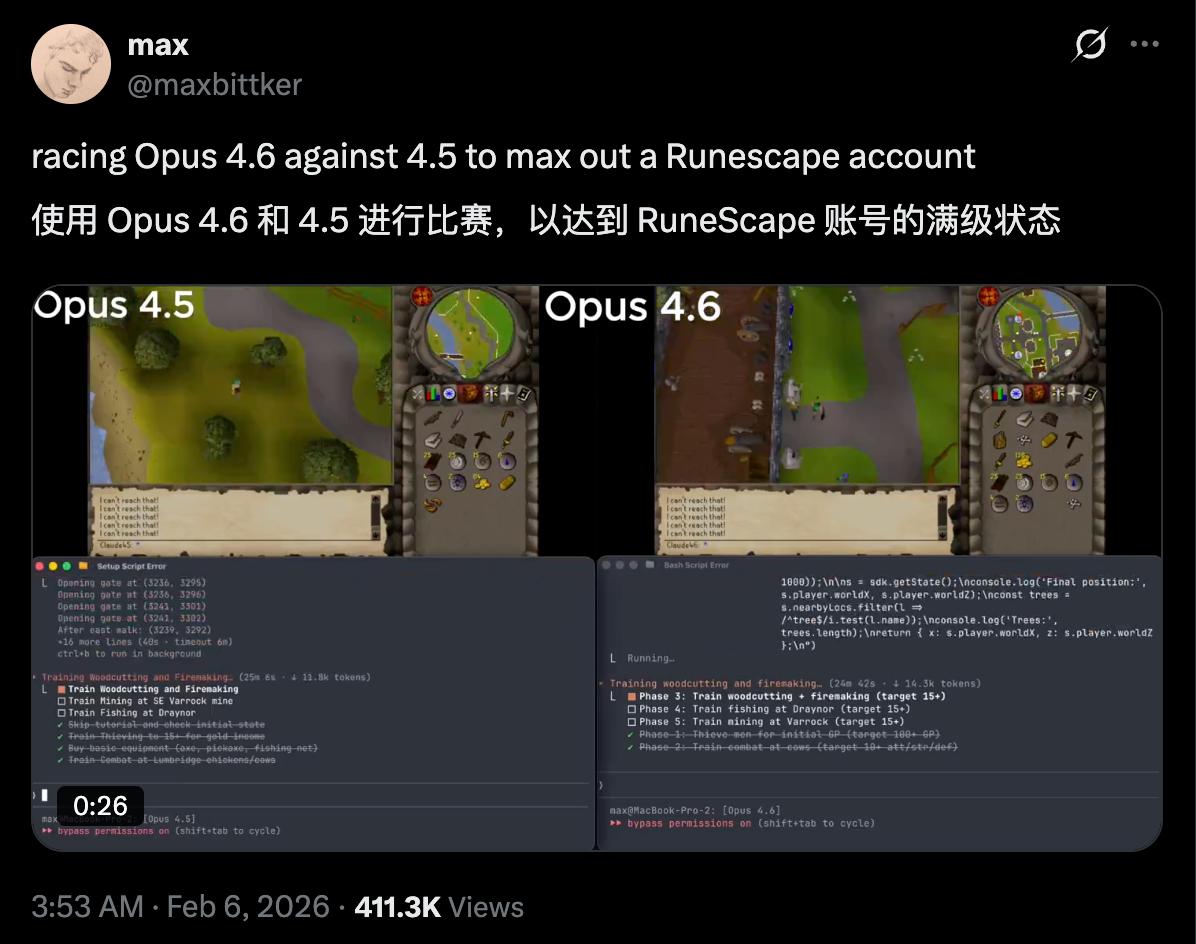

Another user pitted Opus 4.6 against 4.5 in the same management game to see which could reach a higher account level and accumulate more wealth and equipment. The tester noted that 4.6 took longer to settle on its initial strategy but ultimately made better strategic decisions, building a significant lead.

Another user built a Pokémon clone and called it the coolest thing they had made with AI. According to them, Claude Opus 4.6 spent 1 hour and 30 minutes thinking, used 110,000 tokens, and needed only three iterations.

Anthropic’s official demos and early user feedback include a standout example: in a single day, Opus 4.6 autonomously closed 13 issues and correctly routed another 12 to the right human team members.

Much like Kimi K2.5’s agent swarm, Opus 4.6 can manage the codebase of a 50-person organization. In Claude Code, we can form Agent Teams, summoning a whole team of AIs instead of a single one. These agents can take on writing, reviewing, and testing code, and collaborate autonomously.

Some users who tested the agent swarm in Claude Code reported that enabling it made Opus 4.6 2.5 times faster, with better results.

The current landscape resembles this image: each mountain may be taller than the last, but they all stay within the same circle. A few months ago Gemini may have stolen the spotlight, Claude took it in January, and now the turn seems to pass to OpenAI, or perhaps to Musk’s Grok.

Fortunately, through each turn of this cycle, we as users can clearly feel AI capabilities steadily improving.

The GPT-5.3-Codex API is not yet available: the model is capable enough to pose significant risks, and OpenAI is still working out how to open API access safely.

Claude Opus 4.6 is already available through several channels, including the Claude chat app, Claude Code, and the API. As among the first flagship releases of the year from the leading overseas labs, both models are well worth trying.

Going forward, making models serve agents better, so that agents can do real work for us, will remain a central focus of major model updates.
